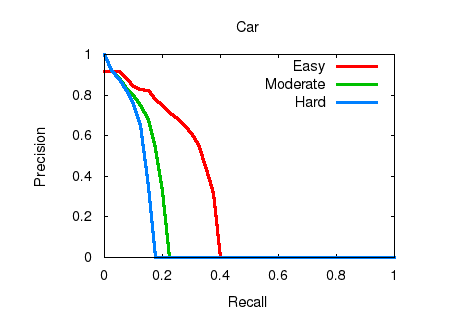

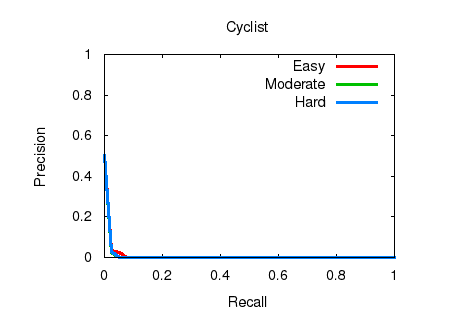

This experiment uses the base YOLOv2 416 × 416 detection framework with its default weights (no training on KITTI). The 'person', 'bicycle', and 'car' classes (out of YOLOv2/COCO's 80 object categories) are treated as the KITTI 'Pedestrian', 'Cyclist', and 'Car' classes. |

@inproceedings{redmon2016you,
  title={You only look once: Unified, real-time object detection},
  author={Redmon, Joseph and Divvala, Santosh and Girshick, Ross and Farhadi, Ali},
  booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
  pages={779--788},
  year={2016}
}

@inproceedings{redmon2017yolo9000,
  title={YOLO9000: Better, Faster, Stronger},
  author={Redmon, Joseph and Farhadi, Ali},
  booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
  year={2017}
} |
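
The class remapping described in the experiment can be sketched as follows. This is a minimal illustration, not the authors' code: the `COCO_TO_KITTI` dictionary, the `remap_detections` helper, and the `(label, score, box)` tuple layout are all assumptions made for the example.

```python
# Hypothetical mapping from the three COCO class names used in the
# experiment to their KITTI counterparts (names taken from the description).
COCO_TO_KITTI = {
    "person": "Pedestrian",
    "bicycle": "Cyclist",
    "car": "Car",
}


def remap_detections(detections):
    """Keep only detections whose COCO label has a KITTI counterpart.

    `detections` is assumed to be an iterable of (label, score, box)
    tuples; the other COCO categories are dropped.
    """
    remapped = []
    for label, score, box in detections:
        kitti_label = COCO_TO_KITTI.get(label)
        if kitti_label is not None:
            remapped.append((kitti_label, score, box))
    return remapped
```

For example, passing a list that contains a 'person' and a 'dog' detection would return only the 'person' entry, relabelled as 'Pedestrian'.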